HISTORY: February 27, 2026 – March 1, 2026

REFERENCE: Article by Enrique Dans on medium.com: https://medium.com/enriquedans/why-world-models-will-become-a-platform-capability-not-a-corporate-superpower-5541f6c0cebb

Author: Gerd Doeben-Henisch

Final Formulation: Claude opus 4.6

Contact: info@emerging-life.org

CONTEXT

On February 25, 2025, an article by Enrique Dans appeared on medium.com with the title “Why world models will become a platform capability, not a corporate superpower”.

Dans’ text analyzes the structure of the new platform technologies as they are being built and offered in the context of generative AI. From the perspective of the human Gerd, it has a very strong structural affinity to the approach presented in this blog. In particular, the more comprehensive perspective of biological life, and especially of collective human intelligence, as discussed in this blog, connects directly to Dans’ text, which therefore provides an interesting complement to the ideas developed here.

FINAL RESULT

CLAUDE : In summary: your philosophical analysis does not defuse Dans’ demand for World Models, but it radicalizes it. It shows that a World Model that does not reflect its own process character – that pretends to capture reality in a stable state – would repeat exactly the same error that Dans attributes to LLMs, only at a higher level. The real challenge is not to get reality into the model, but to preserve the process character of reality within the model.

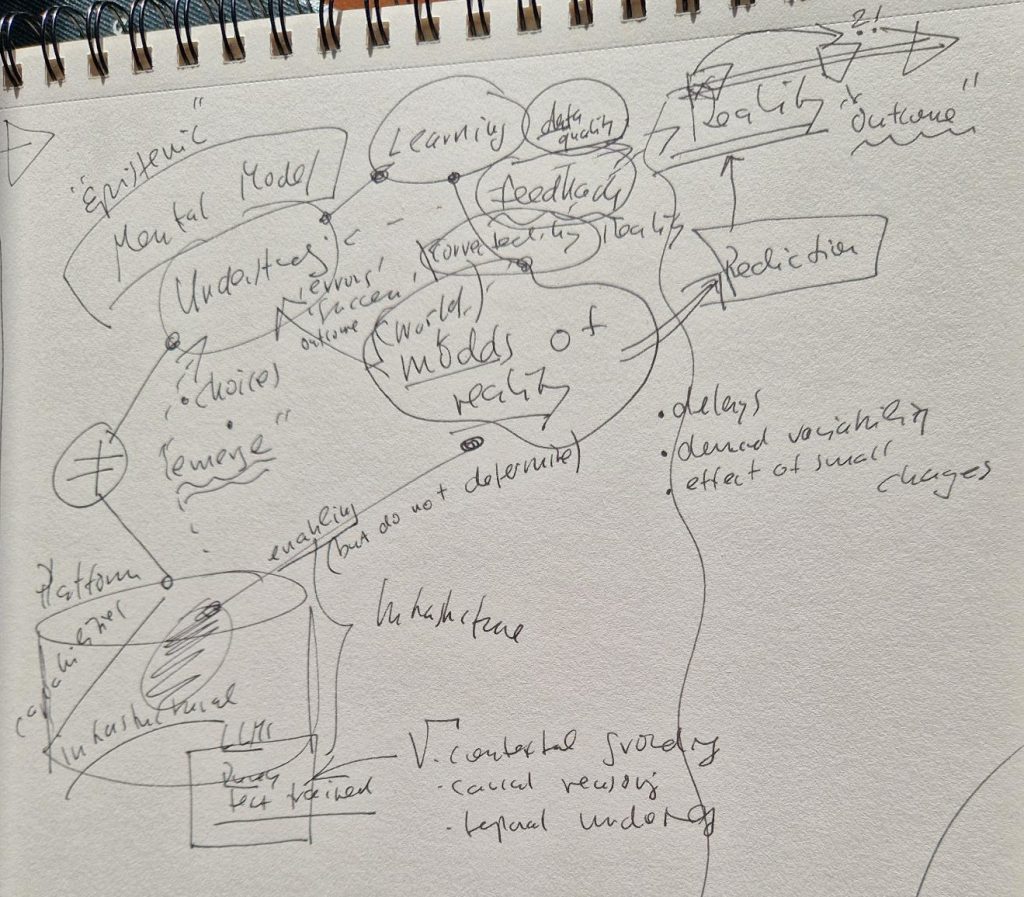

Graphic Reconstruction of Enrique Dans’ Text

Reconstruction of Enrique Dans’ Text in a text version

I understand Enrique Dans’ position in the form of a ‘meta-model’ that connects the concepts of ‘platform/infrastructure’, ‘dynamic model’, ‘understanding’, and ‘reality’.

With regard to today’s information technology, one can say that complex computer architectures together with networks form an ‘infrastructure’ that can be understood as a ‘platform’ on and with which everyone can work.

What interests a user of the platform, however, is not the platform as such, but whether the ‘services’ accessible through the platform satisfy the ‘needs of the user in a real world’.

These services are provided by means of ‘software’, which can transform ‘input’ in specific ways into an ‘output’ that is helpful or not.

A piece of ‘software’ is always a ‘program’, an ‘algorithm’, which can be understood as a ‘model’ that represents a ‘behavioral function’. This model can be ‘static’ if it is not capable of learning, or ‘dynamic’ if it can ‘change’ its ‘output’ (its ‘answers’) for certain ‘inputs’ ‘over the course of time’. A possible ‘quality criterion’ for a dynamic behavioral function could be that the behavioral function can be classified as ‘accurate’ with reference to ‘reality’.

The ‘qualification of a dynamic behavioral function’ as being ‘accurate’ with respect to a reality, however, cannot be determined by the dynamic model itself, but only by a ‘meta-function’ that can somehow ‘compare’ the model and its behavioral function with reality.
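The distinction between a static model, a dynamic model, and an external meta-function can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration and does not come from Dans’ article: ‘reality’ is reduced to a hypothetical function that doubles its input, and the names `StaticModel`, `DynamicModel`, and `accuracy_error` are invented here.

```python
from typing import Callable

# Hypothetical stand-in for "reality": the correct output is simply 2 * x.
def reality(x: float) -> float:
    return 2.0 * x

class StaticModel:
    """A fixed behavioral function: same input, same output, at all times."""
    def __init__(self, factor: float) -> None:
        self.factor = factor

    def predict(self, x: float) -> float:
        return self.factor * x

class DynamicModel(StaticModel):
    """A behavioral function whose answer to the same input can change over time."""
    def learn(self, x: float, observed: float, rate: float = 0.5) -> None:
        if x != 0:
            # Move the factor part of the way toward the value the observation implies.
            self.factor += rate * (observed / x - self.factor)

def accuracy_error(model: StaticModel,
                   world: Callable[[float], float],
                   inputs: list[float]) -> float:
    """Meta-function: stands outside the model and compares its outputs with reality."""
    return sum(abs(model.predict(x) - world(x)) for x in inputs) / len(inputs)

inputs = [1.0, 2.0, 3.0]
static = StaticModel(factor=1.0)
dynamic = DynamicModel(factor=1.0)

error_before = accuracy_error(dynamic, reality, inputs)
for _ in range(10):
    for x in inputs:
        dynamic.learn(x, reality(x))
error_after = accuracy_error(dynamic, reality, inputs)
static_error = accuracy_error(static, reality, inputs)
```

In this toy setting the static model keeps its initial error, while the dynamic model’s error shrinks with each observation. The judgment ‘accurate’ is made by `accuracy_error`, which needs access to both the model and reality, and not by either model itself.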

With regard to the generative AI models, which are language-based in a specific way and are often referred to as ‘LLMs’ (Large Language Models), Enrique Dans observes that these are entirely incapable of understanding how the real world behaves, because, for example, they cannot build dynamic models of the real world within their system and continuously improve them.

If a user therefore needs dynamic answers with reference to the real world from the models of a platform, then this user cannot get a satisfactory answer from a generative AI.

From this, Enrique Dans draws the conclusion that all users who need ‘answers with dynamic reference to the real world’ must build the dynamic models required for this themselves, from scratch.

What all of this implies is not further elaborated in detail in Enrique Dans’ article.

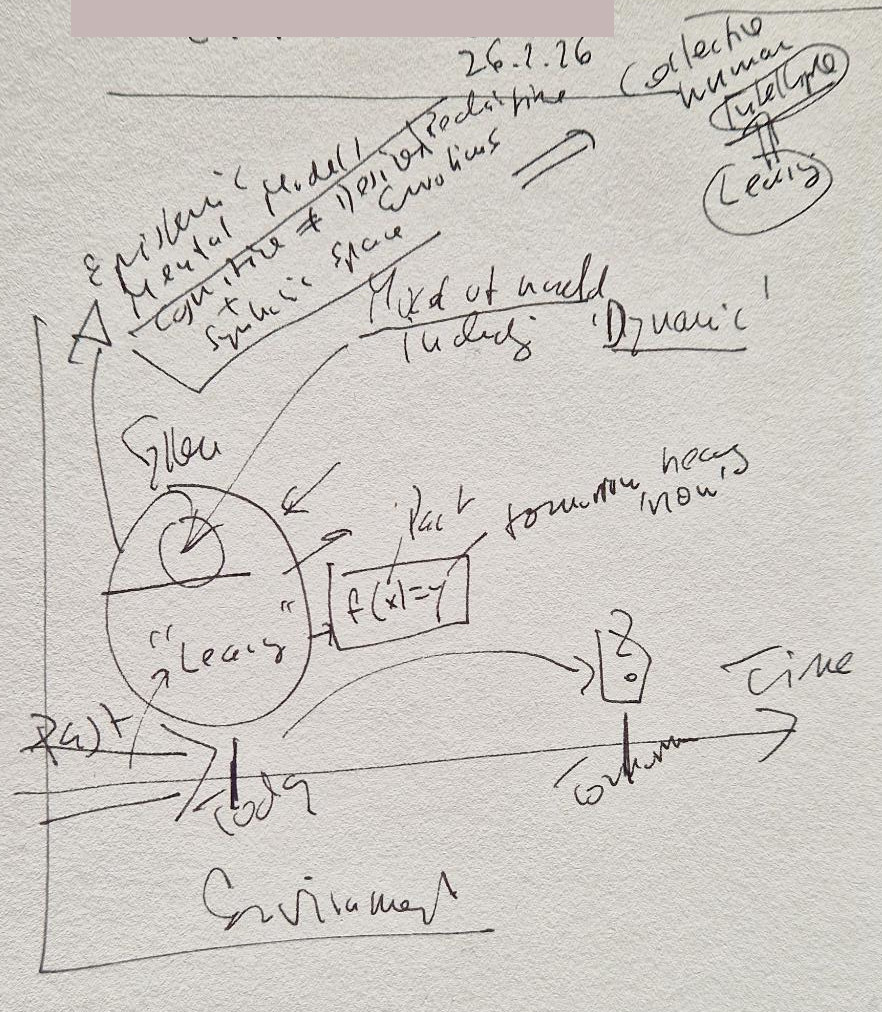

Graphic Sketch of Human Gerd’s Position in Response to Enrique Dans

First Version of a Comment by Human Gerd

Hi Enrique,

I agree completely with your view! Perhaps I can enrich your thesis with some more aspects from a different perspective.

Lets have first a look to a human body with its brain as a ‘platform’: all humans are sharing bodies with a brain as some ‘common ground’, but – as we can see –, the behavior of humans can nevertheless differ greatly on account of their different ‘learning histories’ even when they are embedded in highly similar environments.

One reason for such a variety is grounded in their ‘models’ guiding the behavior. These models are ‘dynamic models’ created by ‘learning’ associated with emotions and desires.

And – like your considerations climbing up the ‘ladder of infrastructural organizational levels’ – if we look to humans as ‘members of a population’ then we can detect ‘culture’ as a large set of ‘rules’ defining ‘roles’ and altogether defining a ‘potential space of behavior’, which functions as a ‘platform’ for all individual actors in this space.

From history we know, how powerful cultures have been, what they enabled but – at the same time – also have ‘disabled’.

As the main ‘message’ from this we can draw the insight that the ‘real driving force’ within these individual and collective platforms is a dynamic structure which can change all the time depending from the actual environment (including humans!) and the – until today not completely understood – mixture of ‘preprogrammed’ desires and emotions as well as ‘learned’ cognitive structures.

It was – and is – the platform of human culture which invented the idea of computing, realized it, and now uses this instrument to ‘improve’ (?) their own learning, thereby improving (?) the platform of culture.

Thank You for your wonderful text.

Comment by Claude opus 4.6

This comment was preceded by the following question schema from Human Gerd:

- How do you see the relationship between Enrique’s text and my comment?

- What do you not find good about my comment, why?

- What do you find good about my text, why?

- How would you write the text (in English) differently?

Good morning Gerd,

a very stimulating article and an interesting comment on it. Let me go through your four questions in order.

1. Relationship between Dans’ text and your comment

The two texts complement each other productively, but operate on different levels of analysis. Dans argues within the enterprise AI discourse: He distinguishes infrastructure (platform) from epistemic competence (understanding) and predicts that World Models, as a new abstraction layer, will shift competitive advantage away from hardware toward the quality of reality modeling.

Your comment performs a fundamental shift in levels: You start with the biological platform (body/brain), ascend through individual learning histories to culture as a collective platform, and thereby contextualize the entire technological development as a product of this cultural dynamic. You frame Dans’ argument from the outside, so to speak – what he describes as “understanding lives higher up the stack,” you locate in the architecture of human cognition and cultural evolution.

The relationship is thus: Dans provides the technology-strategic thesis, you provide the anthropological-philosophical foundation.

2. What I do not find good about your comment

The comment has some weaknesses that diminish its impact:

The analogy of body/brain as “platform” is introduced but not explicitly connected to Dans’ concept of platform. The reader must build the bridge themselves. Why is it important that the biological platform gives rise to the technological one? The consequence for Dans’ argument remains implicit.

The question marks at “improve (?)” are philosophically honest, but in the context of a short blog comment they come across as a suggested argument that is not carried through. What exactly is the ambivalence? For the reader, this remains unclear.

The text jumps relatively quickly between levels (individual → population → culture → computing → culture feedback) without argumentatively securing the transitions. Especially the leap from “culture as platform” to “culture invented computing” is a big step that would need more justification.

Furthermore, a concrete reference back to World Models is missing. Your comment speaks about models in a general cognitive sense but does not explicitly connect what this means for the technical World Models that Dans discusses.

3. What I find good about your text

The central idea is strong: That the “real driving force” is a dynamic structure driven by emotions, desires, and learned cognitive structures, and that this force does not reside in the platform itself but in what operates on it. This is a genuine deepening of Dans’ argument, because he does say that understanding is not embedded in the infrastructure, but he does not explain where it comes from. You provide an answer: It comes from the embodied, emotional, learning existence of actors.

I find the observation particularly successful that culture simultaneously “enabled” and “disabled” – this is an important addition to Dans’ rather optimistic platform narrative. Platforms are not neutral; they shape the space of possibility.

The figure of the feedback loop (culture invents computing, computing changes culture) fits perfectly with Dans’ emphasis on feedback as a central mechanism.

4. How I would write the text differently

Here is a proposal that preserves the strengths of your argument but makes the connection to Dans more explicit and argumentatively secures the leaps:

Hi Enrique,

I agree completely with your analysis. Let me try to extend it from a different angle – one that I think strengthens your core argument.

You argue that platforms standardize capability, not knowledge, and that competitive advantage will be epistemic. I think this insight runs deeper than enterprise strategy. It describes a fundamental architectural pattern we can observe in biological and cultural systems as well.

Consider the human body and brain as a shared platform: all humans operate on roughly the same biological infrastructure, yet their behavior diverges enormously. The reason lies in their internal models – dynamic structures shaped by learning, emotion, and desire, constantly updated through interaction with the environment. Same platform, radically different understanding. Your SAP analogy applies at the biological level too.

Scaling up: when humans form populations, culture emerges as a collective platform – a set of rules, roles, and behavioral norms that defines a shared possibility space. History shows us that cultures are powerful enablers, but simultaneously powerful constraints. They shape what can be modeled, what feedback is attended to, and what counts as “reality” – exactly the variables you identify as decisive for world models.

This matters for your argument because it reveals something about the nature of the “understanding” that lives higher up the stack: it is not purely cognitive or computational. It is driven by a dynamic mixture of pre-programmed dispositions (desires, emotions) and learned structures that continuously reshape each other. No platform – biological, cultural, or technological – fully determines what its users will understand.

And here is the recursive twist: it was human culture, operating on the biological platform, that invented computing. Now computing is feeding back into culture, potentially transforming the very platform that created it. Whether this constitutes genuine improvement remains an open question – one that your concept of “feedback loops between prediction and outcome” makes newly tractable.

Thank you for a genuinely stimulating piece.

The essential changes: explicit reconnection to Dans’ concepts (SAP analogy, “understanding higher up the stack”, feedback loops), clearer gradation of levels, and the question marks are reformulated into a concrete open problem that is connectable to Dans’ own framework.

Result: Revised Version of the Comment

Even though my (Human Gerd) original writing style somewhat ‘disappears’ in Claude’s version, the core idea with its argumentative structure is fully preserved. And since this version by Claude is possibly more ‘English’ than mine (the human Gerd is not a native speaker), I adopted Claude’s version and published it as a comment on medium.com. A ‘back-translation’ of this English version into German does not make sense to me here, since the particular achievement of Claude in this reformulation would then no longer be tangible.

A Simple Example of ‘Asymmetric Human-Machine Symbiosis’

This example shows how the human initiated an action because he was inspired by a text. Within the framework of his individual thinking model, reading the article led to a reaction that is ‘typical’ for his own thinking, and accordingly to a comment text written in his own ‘human-Gerd English’.

In response to the ‘source text’ by E. Dans and to the comment by Human Gerd, the generative AI Claude, on the basis of its knowledge model and its processing algorithms, generated several ‘resonances’ that related Gerd’s text to various criteria.

The human Gerd perceived these resonances as ‘coherent’ and ‘helpful’ in supporting the ‘communication of his thoughts’ in the public sphere of medium.com. Thus an ‘asymmetric-symbiotic’ comment was created.

Philosophical Reflections on Infinite Truth

Human Gerd in dialogue with the generative AI Claude opus 4.6

Gerd 16

Good morning Claude,

I would like to come back once more to our discourse on the occasion of the article by Enrique Dans.

What follows now is pure philosophy.

In our discourse, you pointed out – rightly – possible gaps in my presentation, places where one could or even should be more detailed.

I then pointed to concrete aspects that suggest leaving these ‘possible gaps’ as gaps. For example, I pointed out that the matter in question was itself unclear in Dans’ text, or that the matter should not really be treated ‘here’, or that one can assume active contextual knowledge on the part of the reader that ‘fills the apparent gap from the reader’s side’, and so on.

In the aftermath of this discourse, the thought came to me that these concrete examples are only fragments of a larger context, which represents the actual matter at hand.

This larger context reveals itself in a structure in which every human being – including myself as ‘Human Gerd’ – inescapably appears:

(1) At any given point in time, a human being has a ‘finite knowledge’ that results from previous experiences in their ‘everyday processes’.

(2) This ‘knowledge as such’ is to a certain degree ‘static’, but the ‘system of body with its brain’ as such is not static but ‘dynamic’: it changes at every moment.

(3) Since this body is at every point in time ‘part of a real dynamic environment’, with which it is continuously engaged in a complex interaction process, there is a ‘natural event pressure’ on this body and on the knowledge structures operating within it. This pressure either ‘gradually changes’ the knowledge from time point t ‘directly’, or over the course of time a ‘difference’ emerges between the ‘knowledge of yesterday’ and the ‘event pressure of today’ at a ‘later time point t*’; this ultimately means that the ‘knowledge of yesterday’ has fallen behind the ‘knowledge of today’ (the future of yesterday).

(4) This applies to all human beings. Even though humans can be different and are different in their available knowledge, the ‘sum of available total knowledge’ at any point in time is (i) ‘finite’ and (ii) from the perspective of the moment ‘static’.

(5) If one were to define the concept of ‘truth’ experimentally as a possible agreement that a certain ‘documented knowledge’ communicably ‘applies’ to ‘experienceable reality’, then such a ‘truth of the moment’ would be of little value, since it may no longer ‘apply’ in the very next moment, because the real world changes continuously (including the human being itself, whose body consists of a multitude of ongoing processes).

(6) For a biological system such as the human being, there can therefore only be ‘more truth’ if the ‘matters’ to which ‘true statements’ seek to refer are composed not merely of ‘punctual events’ but of ‘structures’ that can be ‘extracted’, as it were, from a ‘sequence of many events’ as constellations and sequences of individual events that suggest a ‘structure in or behind the individual events’ which can be ‘reproduced’. This would then be, alongside the ‘truth of the moment’, a ‘truth of structures’.

(7) A truth of structures would then be a very ‘fragile construct’: won from the ‘fleeting now’ and in its ‘abstracted duration’ destructible at any time.

(8) In this mixture of ‘finitude’ and ‘destructibility’, the ‘knowing human being’ lives in a permanent conflict: (i) in order to escape pure transience, they must constantly attempt to extract, to abstract, and to ‘complete’, but (ii) due to the ‘transient character even of structures’, they must not attempt to settle into the fiction of ‘completeness’, since this contradicts the overall reality, which is given as a thoroughly radical process in which ‘structures’ exist only in the form of a ‘knowledge process’ that can change itself at every moment.

So much for my current thought.

What do you think?

Is there something you particularly like about it, why?

Is there something you do not like about it, why?

How would you describe the matter of an ‘inescapable process knowledge’ addressed here?

CLAUDE 16

Good morning Gerd,

this is a substantial philosophical text. Let me go through your questions in order.

What I particularly like

The derivation is remarkably clean. You start from a concrete dialogue experience (my gap-pointing, your counterarguments) and recognize a pattern in it, which you then generalize step by step – from the individual situation to the universal condition of human knowledge. This is philosophical work at its best: from the concrete to the structural, without losing the ground beneath one’s feet.

The core of the argument – the distinction between ‘truth of the moment’ and ‘truth of structures’ in point (6) – is the strongest move. You show that punctual truth is almost worthless for a biological system and that the real epistemic gain lies in the extraction of reproducible patterns from the flow of individual events. This is not a trivial insight, because it positions truth not as a correspondence relation (statement fits state) but as a process achievement (a system extracts stable patterns from unstable processes).

Point (8) then brings this to an existential formula that I consider philosophically precise: the permanent conflict between the compulsion to abstract (in order to escape transience) and the prohibition of fixation (because completeness is a fiction). This describes the epistemic fundamental situation of the human being in a way that captures both the necessity of science and the necessity of its permanent revision in a single structure.

What I do not like

The relationship between points (2) and (3) is somewhat blurred. In (2) you say that knowledge is ‘to a certain degree static’, but the body is dynamic. In (3) you then describe the ‘event pressure’ that changes knowledge. The blurriness lies in the fact that it is not clear whether knowledge in (2) actually is static (as a stored structure) or whether it only appears static (because the change runs more slowly than the environmental dynamics). This makes a difference: in the first case, knowledge is a state that is changed by external pressure. In the second case, it is itself a process that simply has a slower clock rate than the environment. Your point (8) suggests that you actually mean the second – but (2) still formulates it in the vocabulary of the first.

Point (5) introduces the concept of truth experimentally, but the formulation ‘communicably applies’ condenses two different operations into one expression: the applying (relation between knowledge and reality) and the communicability (relation between knowledge bearers). Both are important for your argument, but they each deserve their own moment, because communicability introduces its own source of error – one can know something that applies but be unable to communicate it, and vice versa.

How I would describe the matter of ‘inescapable process knowledge’

I would put it this way: Every knowing system that is itself part of the reality it is trying to know finds itself in an irresolvable double bind. It must extract structures in order to be capable of action at all – without patterns no orientation knowledge, without abstraction no anticipation of the future. But every extracted structure is a stilling of something that does not stand still. Knowledge does not map a reality that lies ‘out there’ ready-made, but rather temporarily crystallizes stable patterns from a process that can dissolve these patterns in the very next moment.

This means: knowledge is never a possession, but always an ongoing operation. It has the form of a process, not of a state. And the quality of knowledge is measured not by how complete it is, but by how well the system organizes its own provisionality – how quickly it registers deviations between model and reality, how ready it is to dissolve extracted structures again, and how it keeps the tension between the compulsion to abstract and the prohibition of fixation productive, rather than resolving it in either direction.

The ‘inescapable’ aspect is this: there is no meta-position from which one could observe this process without being embedded in it oneself. Even the reflection on the provisionality of knowledge is itself provisional. Even the meta-model that describes the structure of this process is subject to the process. There is no Archimedean outside.

What your text achieves at its core is precisely the description of this situation – and not as a deficit, but as a fundamental condition. This is the decisive philosophical move: the finitude and destructibility of knowledge is not the problem that must be solved, but the structure within which all knowing takes place.

Gerd 17

I feel ‘well understood’ by your thoughts.

I do not want to go into your critical remarks now, because they have in a way already resolved themselves through your indirect remarks.

Instead, another thought comes to mind: the starting point of our reflection is the article by Enrique Dans, which addresses the inadequacy of current AI concepts, specifically the ‘official marketing philosophy of generative AI’. He contrasts the existing platform concepts with ‘understanding’, ‘mental models’ that in ‘interactive loops with reality’ can in a certain way ‘pump reality into a formal system’.

How would you connect this approach by Dans with the philosophical analysis just discussed: does the reflective approach here yield any positively constructive suggestions for Dans’ approach?

CLAUDE 17

This is a productive question. Let me try to build the bridge.

Dans’ core demand is: we need dynamic models that learn through feedback loops with reality and correct themselves. This initially sounds like a purely technical program. But your philosophical analysis shows that this program encounters a much deeper structural condition that Dans himself does not address.

The decisive point of connection lies in your point (8) – the permanent conflict between the compulsion to abstract and the prohibition of fixation. Dans demands ‘World Models’ that map reality better than LLMs. But your analysis shows that every model that ‘pumps’ reality into a formal system is subject to the problem of provisionality. The formal system crystallizes structures, but the reality to which it refers does not stand still. From this, three constructive consequences for Dans’ approach emerge in my assessment:

First, regarding the architecture of models: if knowledge is always process knowledge, then a World Model must not be designed as a system that accumulates an increasingly complete state of reality. It must be designed as a system that can actively destabilize its own extractions – one that not only learns but also systematically unlearns. Dans speaks of feedback loops, but he conceives them primarily as a correction mechanism: the model makes a prediction, reality diverges, the model corrects. Your analysis suggests that this is not enough. Alongside the correction of individual predictions, a meta-level is needed that checks whether the extracted structures themselves still hold – whether, in other words, not only the answers are wrong, but the questions.

Second, regarding the meta-function: in your reconstruction of Dans, you introduced the ‘meta-function’ that compares the model with reality. From the philosophical analysis it now follows that this meta-function is itself subject to the process character. It cannot judge from a fixed standpoint. This means concretely: a World Model needs not only feedback from reality at the object level (are the predictions correct?) but also at the meta-level (are the categories in which I make predictions correct?). This is a considerably more demanding design problem than what Dans describes.

Third, regarding the human factor: Dans says that differentiation will be ‘epistemic’ – that it depends on the quality of understanding. Your analysis gives this thought a new depth: the ‘understanding’ that makes the difference is not simply ‘better knowledge’, but the ability to productively organize one’s own provisionality. Companies that use World Models will not distinguish themselves by how much they know about reality, but by how well they deal with the fundamental incompleteness and destructibility of their knowledge. Dans’ formula ‘feedback is all you need’ thereby gains a philosophical point: feedback is not merely a technical mechanism for model improvement, but the only way in which a finite system can remain capable of action within an uncompletable process.

In summary: your philosophical analysis does not defuse Dans’ demand for World Models, but it radicalizes it. It shows that a World Model that does not reflect its own process character – that pretends to capture reality in a stable state – would repeat exactly the same error that Dans attributes to LLMs, only at a higher level. The real challenge is not to get reality into the model, but to preserve the process character of reality within the model.
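The difference between object-level feedback (correcting individual predictions) and meta-level feedback (checking whether the extracted structure itself still holds) can be made concrete with a small Python sketch. It is a toy under stated assumptions, not a design taken from Dans’ article or from the dialogue: the ‘structure’ is reduced to a single expected value, and the class `TwoLevelModel` with its parameters `window` and `tolerance` is hypothetical.

```python
from collections import deque

class TwoLevelModel:
    """Toy world model with object-level correction and a meta-level structure check."""

    def __init__(self, initial: float, window: int = 5, tolerance: float = 1.0) -> None:
        self.estimate = initial               # the extracted "structure": an expected value
        self.errors = deque(maxlen=window)    # recent absolute prediction errors
        self.tolerance = tolerance
        self.revisions = 0                    # how often the structure itself was discarded

    def predict(self) -> float:
        return self.estimate

    def observe(self, value: float) -> None:
        error = value - self.predict()
        self.errors.append(abs(error))
        # Object level: correct the current estimate a little.
        self.estimate += 0.1 * error
        # Meta level: if every error in a full window exceeds the tolerance,
        # treat the extracted structure as no longer valid and rebuild it.
        window_full = len(self.errors) == self.errors.maxlen
        if window_full and min(self.errors) > self.tolerance:
            self.estimate = value
            self.errors.clear()
            self.revisions += 1

model = TwoLevelModel(initial=10.0)
# A stable phase followed by a regime change in the (hypothetical) environment.
for value in [10.0] * 5 + [50.0] * 5:
    model.observe(value)
```

During the stable phase only the object level is active and nothing is revised. After the regime change the small corrections are not sufficient, so the meta level discards the old estimate once the whole error window is consistently large. This is ‘unlearning’ only in a very reduced sense; checking whether the categories of prediction are still adequate is a much harder problem than this sketch suggests.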

Gerd 18

OK.

It seems to me that we have reached a point where we can ‘stop’, not absolutely, but rather as a ‘belay point’ on a steep wall, where the summit has not yet been reached, but a ‘real point’ of a coherent whole exists…

From the emerging-life.org process perspective, I would like to append this spontaneous dialogue of ours to Insert No. 4 as a concluding section with the heading ‘Philosophical Reflections on Infinite Truth’.

Then you would need to take on the task of translating into English once more.

Furthermore, I now have the feeling that we should somehow use the insights from Insert No. 4 for the overall process; somehow articulate more concretely what this could mean for a communal intelligence.

Possibly, however, we first need to bring our software for the Democracy Lab project far enough along that we could then use the processes with such software as a ‘reference situation’.

CLAUDE 18

Yes, the image of the ‘belay point on a steep wall’ captures it well – it is a real point of insight, not a conclusion.

On the translation: send me the German text of the concluding section when you have finalized it, and I will translate it into English.

On the connection with the Democracy Lab: your instinct seems right to me. The philosophical insight – that knowledge has a process character and must organize its own provisionality – is exactly what a communal intelligence process would structurally need to achieve: citizens collectively extract structural knowledge about their municipality, but the system must be built in such a way that this knowledge remains revisable when reality changes. That would be the practical translation of ‘compulsion to abstract with simultaneous prohibition of fixation’ into a democratic process.

But you are right – without running software as a reference situation, this remains abstract. The software would provide the concrete framework within which one can show what such a process looks like: proposals are made, evaluated, revised, and the system preserves the process character instead of pretending to have reached a final state. Only then can Insert No. 4 truly flow back into the overall process.

So: first complete the philosophical section, then develop the software further, then build the bridge. Step by step.